Last Updated on May 31, 2015 by Dave Farquhar

A commenter asked me last week if I really believe the lock in a web browser means something.

I’ve configured and tested and reviewed hundreds of web servers over the years, so I certainly hope it does. I spend a lot more time looking at these connections from the server side, but that experience means I understand what I’m seeing when I look at them from the web browser too.

So here’s how to use it to verify your web connections are secure, if you want to go beyond the lock-good, broken-lock-bad mantra.

I’m going to use Chrome for the demonstration, because it tells you more than the other browsers do. The same methods work with all of the other major browsers; they just don’t share as many of the nitty-gritty details that give a CISSP flashbacks to that 6-hour, 250-question test.

Here’s the lock in action. I took this screenshot right after I clicked on the lock. The first line, “Identity verified,” says the owner of the site really is who it says it is. If the site has been hijacked, you’ll see something else, and that lock won’t be green. There’s a second tab you can click on to get more detail.

And here’s what the other tab looks like:

The identity is verified. Good. It’s using 256-bit TLS 1.2. Good. These days 128-bit encryption is acceptable; 40-bit encryption has been obsolete since the 1990s. TLS is better than SSL, and higher version numbers are better. SSL is known to be bad now, but it’s better than nothing.

ChaCha20-Poly1305 is an alternative to AES, which is the current industry standard. ChaCha20 is a stream cipher designed by Daniel J. Bernstein, and Poly1305 is the message authentication code paired with it. More frequently you’ll see sites using straight AES_CBC. Some believe the alternative Google is using is safer, and it does run faster than AES on phones that lack hardware AES support, but Google may very well be using it for political reasons at least as much as technical ones. Interestingly, Microsoft and Yahoo, who are mad at the government for the same reason Google is, are still using AES. Then again, both of them dislike Google too, so they may be waiting as long as possible to validate what Google is doing.
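If you want to check the same two details, protocol version and key size, outside the browser, Python’s standard `ssl` module can report them. This is a minimal sketch, not an audit tool: `grade_connection` and `inspect_site` are hypothetical helpers, and the thresholds simply encode the rules of thumb above (TLS beats SSL, 128 bits is acceptable, 40 bits is obsolete).

```python
import socket
import ssl
from typing import Tuple


def grade_connection(protocol: str, secret_bits: int) -> str:
    """Apply this post's rules of thumb to a negotiated connection.

    protocol is a string like the one ssl.SSLSocket.version() returns
    (e.g. "TLSv1.2"); secret_bits is the symmetric key size in bits.
    """
    if protocol.startswith("SSL"):
        return "bad: SSL is broken, though better than nothing"
    if secret_bits <= 40:
        return "bad: 40-bit encryption has been obsolete since the 1990s"
    if secret_bits < 128:
        return "weak: below the 128-bit minimum"
    if protocol in ("TLSv1", "TLSv1.1"):
        return "acceptable: strong key, but an old TLS version"
    return "good"


def inspect_site(host: str, port: int = 443) -> Tuple[str, str, int]:
    """Connect to host and return (protocol, cipher name, secret bits).

    Requires network access. Certificate and hostname checks stay enabled.
    """
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            # cipher() returns (cipher name, protocol version, secret bits).
            cipher_name, _, secret_bits = tls.cipher()
            return tls.version(), cipher_name, secret_bits
```

A typical use would be `grade_connection(*inspect_site(host)[::2])`, which feeds the protocol and key size into the grader. Depending on your Python and OpenSSL versions, the default context may refuse to negotiate TLS 1.0 or 1.1 at all, in which case `inspect_site` raises an error against an old server rather than returning a grade.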

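ECDHE_ECDSA describes how the site establishes the encrypted connection: ECDHE (ephemeral elliptic-curve Diffie-Hellman) handles the key exchange, and ECDSA is the signature algorithm the server uses to prove it holds its certificate’s private key. Because the exchange is ephemeral, each session gets fresh keys, so someone who records the traffic today and steals the server’s private key later still can’t decrypt it. Most sites use RSA, because RSA is about four times faster. Google isn’t shy about spending money on security.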
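To see how a name like ECDHE_ECDSA breaks down, here is a small sketch that splits an IANA-style cipher suite name into its parts. It assumes the `TLS_<key exchange>_<authentication>_WITH_<cipher>_<hash>` pattern used by TLS 1.2 and earlier; `parse_suite` and `Suite` are hypothetical names, and TLS 1.3 suite names, which drop the key exchange and authentication parts, are out of scope.

```python
from typing import NamedTuple


class Suite(NamedTuple):
    key_exchange: str
    authentication: str
    cipher: str
    hash_alg: str
    forward_secrecy: bool


def parse_suite(name: str) -> Suite:
    """Split a TLS 1.2-style IANA cipher suite name into its components."""
    if not name.startswith("TLS_") or "_WITH_" not in name:
        raise ValueError(f"not a TLS 1.2-style suite name: {name}")
    left, right = name[len("TLS_"):].split("_WITH_", 1)
    parts = left.split("_")
    key_exchange = parts[0]
    # Plain "RSA" uses one algorithm for both key exchange and authentication.
    authentication = "_".join(parts[1:]) or parts[0]
    # The last component is the hash (the MAC for CBC and stream ciphers).
    cipher, hash_alg = right.rsplit("_", 1)
    # Ephemeral Diffie-Hellman (DHE, ECDHE) gives each session fresh keys.
    forward_secrecy = key_exchange in ("DHE", "ECDHE")
    return Suite(key_exchange, authentication, cipher, hash_alg, forward_secrecy)


print(parse_suite("TLS_ECDHE_ECDSA_WITH_CHACHA20_POLY1305_SHA256"))
print(parse_suite("TLS_RSA_WITH_RC4_128_SHA"))
```

The first name parses as ECDHE key exchange with ECDSA authentication and forward secrecy; the second, an RC4 suite with plain RSA key exchange, has no forward secrecy.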

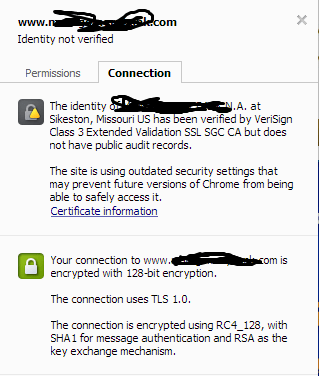

That’s a site that holds nothing back. Here’s a site that’s doing just about the bare minimum that you can get by with in 2005.

What don’t I like? It’s a 128-bit connection, TLS 1.0, and the RC4 encryption algorithm, which RFC 7465 prohibited in TLS in February 2015. RSA is fine for key exchange; SHA1 for message authentication is better than MD5 but hardly revolutionary. They’re doing enough to make an auditor check the right boxes every year. As you’ll note, Chrome isn’t thrilled either. It says, “This site is using outdated security settings that may prevent future versions of Chrome from being able to safely access it.” Google pushes the envelope on stuff like this, which is why I recommend Chrome, especially if you don’t do this stuff for a living like I do. I scribbled out the names to avoid totally throwing a small Missouri business under the bus.
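The problems in that second screenshot can be flagged mechanically. This is a rough sketch, not a substitute for a real TLS scanner: `find_weaknesses` is a hypothetical helper that takes a protocol string in the form Python’s `ssl` module reports plus an IANA-style suite name, and it only checks for the issues discussed in this post.

```python
from typing import List


def find_weaknesses(protocol: str, suite: str) -> List[str]:
    """Return a list of findings for a negotiated protocol and cipher suite."""
    findings = []
    if protocol.startswith("SSL"):
        findings.append("SSL protocol")
    elif protocol in ("TLSv1", "TLSv1.1"):
        findings.append(f"old protocol version ({protocol})")
    if "_RC4_" in suite:
        findings.append("RC4 cipher")
    if "EXPORT" in suite:
        findings.append("export-grade (40-bit) cipher")
    if suite.endswith("_MD5"):
        findings.append("MD5 message authentication")
    elif suite.endswith("_SHA"):
        # In IANA names a bare "_SHA" suffix means SHA-1: dated, not yet broken as a MAC.
        findings.append("SHA-1 message authentication (dated)")
    return findings


print(find_weaknesses("TLSv1", "TLS_RSA_WITH_RC4_128_SHA"))
```

For the small business’s connection this reports the old protocol version, RC4, and SHA-1, while a TLS 1.2 connection using `TLS_ECDHE_ECDSA_WITH_CHACHA20_POLY1305_SHA256` comes back clean.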

If you want to see bad, connect to my site (yes, mine) via https at https://dfarq.homeip.net, then click where the lock used to be. There you’ll see a self-signed certificate. I generated it myself. Nobody aside from me can verify much of anything in it, which is why your browser throws a fit when it connects to it. It’s good enough for me to trust for my purposes: it lets me connect to the site remotely and give my username and password without anyone snooping, which is why it’s there. But I don’t expect anyone else to trust it for anything. I’ll replace it in the near future with something trustworthy, but it’s good for illustration purposes now. If you visit a site you normally trust and see something that looks like mine, then you know something is wrong.
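The same behavior shows up outside the browser. Python’s `ssl.create_default_context()` verifies both the certificate chain and the hostname, so a handshake with a server using a self-signed certificate fails with `ssl.SSLCertVerificationError` unless you explicitly tell it to trust that one certificate, which is the programmatic version of deciding a certificate is “good enough for my purposes.” A sketch, where `make_context`, `check_certificate`, and any `cafile` path you pass are hypothetical:

```python
import socket
import ssl
from typing import Optional


def make_context(cafile: Optional[str] = None) -> ssl.SSLContext:
    """Build a client context that verifies certificates and hostnames.

    With cafile=None, the system's trusted CAs are used. Passing the path of a
    self-signed certificate you generated yourself trusts only that
    certificate; do this only for a server you control.
    """
    return ssl.create_default_context(cafile=cafile)


def check_certificate(host: str, port: int = 443, cafile: Optional[str] = None) -> str:
    """Attempt a verified TLS handshake and report whether the certificate passed."""
    context = make_context(cafile)
    try:
        with socket.create_connection((host, port), timeout=10) as sock:
            with context.wrap_socket(sock, server_hostname=host):
                return "verified"
    except ssl.SSLCertVerificationError as err:
        # verify_message carries OpenSSL's reason, e.g. a self-signed certificate.
        return f"not trusted: {err.verify_message}"
```

Pointed at a server with a self-signed certificate, `check_certificate` should return a “not trusted” message; pointed at the same server with `cafile` set to that server’s own certificate, it should verify, as long as the hostname matches.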

So, yes, there’s a lot going on behind that lock. I don’t expect anyone I work with to understand anything beyond lock good, broken lock bad, but there’s definitely a reason the browser displays what it displays, and a packet capture of the outgoing traffic shows the difference: you’ll be able to read straight http traffic, but encrypted https traffic will be incomprehensible.
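A crude way to see that difference without reading the capture yourself is to measure how much of a payload is printable text. This is a heuristic sketch, not a protocol analyzer: `printable_ratio`, `looks_like_plaintext`, and the 0.9 threshold are arbitrary choices for illustration, and `os.urandom` stands in for ciphertext on the assumption that well-encrypted data looks like random bytes.

```python
import os
import string

# Byte values for ASCII letters, digits, punctuation, and whitespace.
PRINTABLE = set(string.printable.encode("ascii"))


def printable_ratio(payload: bytes) -> float:
    """Fraction of bytes in the payload that are printable ASCII."""
    if not payload:
        return 0.0
    return sum(b in PRINTABLE for b in payload) / len(payload)


def looks_like_plaintext(payload: bytes, threshold: float = 0.9) -> bool:
    """Guess whether a captured payload is readable text, like plain http."""
    return printable_ratio(payload) >= threshold


http_request = b"GET /login?user=dave HTTP/1.1\r\nHost: example.com\r\n\r\n"
ciphertext_stand_in = os.urandom(len(http_request))
print(looks_like_plaintext(http_request))  # True
print(looks_like_plaintext(ciphertext_stand_in))
```

Random bytes are printable only about 100 times in 256, so the stand-in lands far below the threshold. Even with https, the handshake itself (including the server name and, before TLS 1.3, the certificate) is visible in a capture; it’s the application data that turns into noise.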

Admittedly, I’m not a cryptographer, and I consider it a lucky break that I’ve never had to actually encrypt or decrypt a message to pass a certification exam. My memory is good enough to keep track of which algorithms are safe to use, which ones are nearing end of life, and which ones time has passed by. I know enough that I’m not accepting on blind faith that cryptography works, but I’m also very glad that developing and testing the algorithms is someone else’s job. The math is well beyond me, but having heard some of the people who invented this stuff speak, I know cryptography isn’t a sham.

Tying this back to the Lenovo Superfish scandal, the reason it was bad is that the private key for the self-signed root certificate Lenovo preloaded, the same one on every affected machine, turned out to be very easy to extract. With that key, it doesn’t take much to create nefarious web sites that any affected Lenovo computer will trust as legitimate. You don’t necessarily even have to get in between the Lenovo machine and a legitimate site in order to do bad things. Anyone who can set up a simple web site could misuse this information. And the only way to foil it is to click on the lock in the browser and see names you don’t recognize.
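Looking for names you don’t recognize can be scripted too. Python’s `ssl.SSLSocket.getpeercert()` returns a verified certificate as a dictionary whose `issuer` field is a tuple of name components; the sketch below pulls out the issuing organization and compares it with a list you expect. `issuer_org`, `unexpected_issuer`, the expected-issuer list, and the sample certificate data are all hypothetical, made up for illustration.

```python
from typing import Iterable, Optional


def issuer_org(cert: dict) -> Optional[str]:
    """Return the issuer's organizationName from a getpeercert()-style dict."""
    for rdn in cert.get("issuer", ()):
        for key, value in rdn:
            if key == "organizationName":
                return value
    return None


def unexpected_issuer(cert: dict, expected: Iterable[str]) -> bool:
    """True if the certificate's issuer is not one of the organizations you expect."""
    return issuer_org(cert) not in set(expected)


# Hypothetical certificate data in the shape getpeercert() returns.
sample_cert = {
    "subject": ((("commonName", "www.example.com"),),),
    "issuer": (
        (("countryName", "US"),),
        (("organizationName", "Unfamiliar Ad Software, Inc."),),
        (("commonName", "Unfamiliar Ad Software Root"),),
    ),
}
print(unexpected_issuer(sample_cert, ["Example Trusted CA"]))  # True
```

In a real check the dictionary would come from `getpeercert()` on a connected socket. It comes back empty if certificate verification was turned off, and a missing issuer is treated as unexpected here.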

That’s why I hope Lenovo learned its lesson and other computer makers learn from Lenovo’s mistakes.

David Farquhar is a computer security professional, entrepreneur, and author. He has written professionally about computers since 1991, so he was writing about retro computers when they were still new. He has been working in IT professionally since 1994 and has specialized in vulnerability management since 2013. He holds Security+ and CISSP certifications. Today he blogs five times a week, mostly about retro computers and retro gaming covering the time period from 1975 to 2000.